Artificial Intelligence, Social Media, and Political Violence Prevention

This project uses new Artificial Intelligence (AI) tools to analyze manipulated political memes on social media, an important source of disinformation and a contributor to political instability. The project seeks to identify and track manipulative, false, and dehumanizing messaging on high-stakes issues in political discourse. It aims to empower policymakers, human rights organizations, and journalists by providing them with relevant information in near real time to help prevent political instability and human rights violations.

The Challenge

Social media has become a new battleground in contemporary political conflicts. Harmful and manipulative political content now circulates more rapidly and widely than ever before, and the dangers are acute. This is especially evident in the use of political memes: multimedia content, spread primarily through social media, that is meant to engage an in-group and/or antagonize an out-group for political ends.

For example:

- In Indonesia, in the lead-up to the 2019 elections, Instagram and Twitter were filled with conspiratorial allegations about treasonous politicians who had to be prevented from winning at the ballot box by any means, including through terror, threats, and killings.

- In Myanmar, ongoing government atrocities against the Rohingya minority group have been justified and fueled by nationalist memes on Twitter and Facebook. The Rohingya are accused of being dangerous foreigners and an existential threat to the integrity and survival of the country that must be eradicated. The 2021 coup was also supported by a wide-ranging social media effort to legitimize and consolidate military rule.

- In Colombia, continuing instability following the 2016 Peace Accord between rebels and the government has been partly fueled by dehumanizing and misleading social media posts about political opponents, which increased around the 2022 national elections and continue today.

- In Ukraine, Russia's 2022 military invasion and its prior support for separatist forces have been accompanied by significant political disinformation campaigns across social media. These campaigns have legitimized violence and undermined factual reporting, accountability, and peacebuilding efforts across the region.

A Way Forward

Integrating social media analysis into early warning evaluations may significantly enhance conflict prevention and intervention work.

Social media streams do not necessarily represent a more factually accurate portrayal of what is occurring: their content may be misleading or contain outright lies, and content origin can be hard to identify. However, social media is becoming increasingly important in political conflicts and contentious situations, and politicized social media feeds provide a stream of real-time data that can be analyzed in order to identify trends which signify increased potential for outbreaks of political violence.
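As a rough illustration of what identifying a trend in a real-time stream can mean in practice, the sketch below flags days on which the volume of posts on a monitored topic jumps well above its recent baseline. This is not the project's method: the trailing-window z-score, the function name `flag_spikes`, the `window` and `threshold` values, and the daily counts are all assumptions made up for this example.

```python
from statistics import mean, stdev


def flag_spikes(daily_counts, window=7, threshold=3.0):
    """Return indices of days whose count sits more than `threshold`
    standard deviations above the mean of the preceding `window` days.

    Hypothetical helper for illustration only.
    """
    spikes = []
    for i in range(window, len(daily_counts)):
        history = daily_counts[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A perfectly flat baseline gives no scale to compare against.
            continue
        if (daily_counts[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes


# Made-up daily post counts for one topic; day 8 is an obvious surge.
counts = [40, 42, 38, 41, 39, 43, 40, 41, 180, 44]
print(flag_spikes(counts))
```

A flagged day is only a prompt for human review. As noted above, a surge in content says nothing by itself about whether that content is accurate or where it originated.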

AI systems are capable of enhancing the work of peacebuilders in specific and important ways: artificial intelligence provides unique tools for identifying and analyzing emergent trends and threats within massive volumes of real-time data on the Internet, beyond the capacities of most existing political violence early warning systems.

We seek to provide journalists and prevention practitioners (that is, policymakers, analysts, and human rights advocates in the atrocity prevention community) with data-rich, theoretically informed assessments of violence escalation processes in near real time. This project aims to enhance existing early warning and prevention efforts, and thus strengthen timely and effective prevention by providing important information that would otherwise be missed.

Our Work

Using our AI technologies, we are building a system whose computer models are capable of understanding the ways political actors, both government and non-government, may use social media to dehumanize opponents and provoke violence against them.

Our system includes new algorithms as well as infrastructure to monitor multiple social media ecosystems at scale. We are also developing systems with the ability to identify trends across various media modalities, tracking provenance, spread, and malice indicators in video, audio, and text formats.

Our system consists of three primary components:

- Data Ingestion Platform: We collect media content (political memes) from public social media postings, on the order of millions of instances, with collection parameters confirmed by country-specific case specialists. We work with local human rights groups and experts to ensure that major actors, social media accounts, and topics are properly identified.

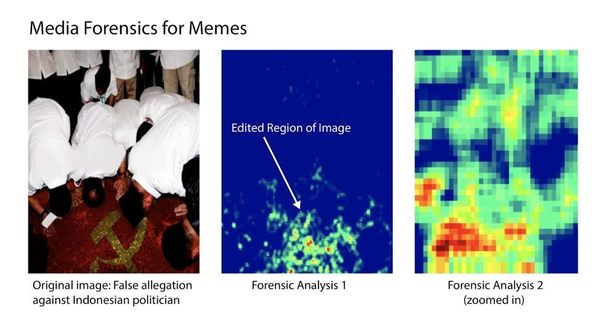

- Artificial Intelligence Analysis Engine: We conduct automated analyses of the large collections of media content assembled at the data ingestion stage by employing state-of-the-art artificial perception methods. Basic features of images, such as style and content, are indexed and used to establish connections and similarities with other images for further querying and analysis.

- User Interface: The final component includes an interface to ensure that information can be shared quickly and efficiently with relevant actors, such as the atrocity prevention and human rights communities.
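The idea of indexing image features and then querying for similar images can be sketched in a few lines. The example below is not the project's analysis engine: it swaps in average hashing, a very simple perceptual-hash technique, in place of the learned style and content features described above. The function names, the tiny 4x4 grayscale grids, and the `max_distance` cutoff are all hypothetical and chosen only to keep the example self-contained.

```python
def average_hash(pixels):
    """Hash a small grayscale image (2D list of 0-255 values) into an int.

    Each pixel contributes one bit: 1 if it is brighter than the image's
    mean brightness, 0 otherwise.
    """
    flat = [value for row in pixels for value in row]
    avg = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > avg else 0)
    return bits


def hamming(a, b):
    """Count the bit positions at which two hashes differ."""
    return bin(a ^ b).count("1")


def find_similar(index, query_hash, max_distance=2):
    """Return the names of indexed images within `max_distance` bits."""
    return sorted(
        name for name, h in index.items()
        if hamming(h, query_hash) <= max_distance
    )


# Toy 4x4 "images": a template, a lightly edited copy, and an unrelated one.
meme_a = [[200, 200, 10, 10], [200, 200, 10, 10],
          [10, 10, 200, 200], [10, 10, 200, 200]]
meme_a_variant = [[190, 210, 20, 5], [205, 195, 15, 10],
                  [12, 8, 190, 205], [10, 150, 200, 198]]
unrelated = [[10, 200, 10, 200], [200, 10, 200, 10],
             [10, 200, 10, 200], [200, 10, 200, 10]]

index = {"meme_a": average_hash(meme_a), "unrelated": average_hash(unrelated)}
print(find_similar(index, average_hash(meme_a_variant)))
```

The edited copy differs from the template by a single bit, so the query returns only `meme_a`. Real memes are cropped, recolored, and overlaid with new text, which is why a production system would need far richer features than a brightness hash.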

Guiding Principles

Our efforts are guided by a set of basic principles:

- Harm Reduction: The fundamental aim of this system is to assist efforts to prevent or lessen violence against civilians.

- Transparency: It is crucial that the structure and operation of the system be presented in such a way that the general public can understand what the system entails. Thus, the main aspects of data collection and analysis will be clearly presented and available publicly. We do not rely on surveilling private communications.

- Accessibility: We will prioritize accessibility for actors whose work has a demonstrable commitment to advancing human rights and protecting civilians, such as human rights organizations, research institutes, journalists, and global and regional governance institutions such as the United Nations.

- Independence: We are committed to evidence-based research for the common good. Thus, we endorse independence of analysis and objective reporting of results.

University of Notre Dame Project Researchers

Kristina Hook is assistant professor of conflict management at Kennesaw State University. Her research focuses on large-scale violence against civilians (including genocides and mass atrocities) as well as emerging forms of warfare and violence. She is a 2020 graduate of the Kroc Institute's Ph.D. Program in Peace Studies.

Walter Scheirer is an associate professor in the Department of Computer Science and Engineering at the University of Notre Dame. His research is in the area of artificial intelligence, with a focus on visual recognition, media forensics, and ethics. He is also a Kroc Institute Faculty Fellow.

Tim Weninger is an associate professor in the Department of Computer Science and Engineering at the University of Notre Dame. His research is at the intersection of social media, artificial intelligence, and graphs.

Ernesto Verdeja is an associate professor at the University of Notre Dame holding a joint appointment in the Department of Political Science and the Kroc Institute for International Peace Studies. He is also the executive director of the Institute for the Study of Genocide, a nonprofit human rights organization. His research is on genocide and mass atrocities, transitional justice, and political reconciliation.

Michael Yankoski is a research affiliate with the Department of Computer Science and Engineering at the University of Notre Dame. Michael received his Ph.D. from the Kroc Institute for International Peace Studies. His research focuses on the intersection of peace studies, social media, and artificial intelligence, with a specific interest in coordinated disinformation campaigns in volatile political contexts.

Publications

William Theisen, Michael Yankoski, Kristina Hook, Ernesto Verdeja, Walter Scheirer, Tim Weninger (2024). An Avalanche of Images on Telegram Preceded Russia’s Full-Scale Invasion of Ukraine. arXiv.

Hook, Kristina and Ernesto Verdeja (2022) "Social Media Misinformation and the Prevention of Political Instability and Mass Atrocities," The Henry L. Stimson Center, Washington, D.C.

Thomas, Pamela Bilo, Clark Hogan-Taylor, Michael Yankoski, and Tim Weninger. "Pilot study suggests online media literacy programming reduces belief in false news in Indonesia," First Monday, Volume 27, Number 1, 3 January 2022. doi: https://dx.doi.org/10.5210/fm.v27i1.11683

Yankoski, Michael, Theisen, William, Verdeja, Ernesto, Scheirer, Walter (2021) Artificial Intelligence for Peace: An Early Warning System for Mass Violence. In: Keskin T., Kiggins R.D. (eds) Towards an International Political Economy of Artificial Intelligence.

Yankoski, Michael, Weninger, Tim & Scheirer, Walter (2021) Meme Warfare: AI countermeasures to disinformation should focus on popular, not perfect, fakes.

Yankoski, Michael, Weninger, Tim & Scheirer, Walter (2020) An AI early warning system to monitor online disinformation, stop violence, and protect elections, Bulletin of the Atomic Scientists, 76:2, 85-90, DOI: 10.1080/00963402.2020.1728976

William Theisen, Joel Brogan, Pamela Bilo Thomas, Daniel Moreira, Pascal Phoa, Tim Weninger, and Walter Scheirer. 2020. Automatic Discovery of Political Meme Genres with Diverse Appearances. arXiv:2001.06122

Affiliated University of Notre Dame Entities

- Kroc Institute for International Peace Studies

- Department of Computer Science and Engineering

- Department of Political Science

- Keough School for Global Affairs

- Notre Dame Technology Ethics Center

- Pulte Institute for Global Development